| Bytes | Lang | Time | Link |

|---|---|---|---|

| 299 | yacc GNU Bison | 250330T175422Z | ais523 |

| nan | Rust | 250507T124323Z | Deadbeef |

| 004 | BrainChild | 250331T044939Z | ATaco |

| 007 | Swift for wasm | 230303T202655Z | Bbrk24 |

| 042 | Rust | 230302T134656Z | JSorngar |

| 054 | C | 211124T064713Z | Random H |

| nan | Myxal 0.7.1 | 220528T214331Z | Seggan |

| 022 | Julia | 211124T165443Z | MarcMush |

| nan | 211124T154012Z | Tynuk | |

| nan | 210216T224443Z | mystery | |

| nan | Boo | 171024T161439Z | user7520 |

| nan | C | 170225T113929Z | H2CO3 |

| 367 | C | 160121T034127Z | Zakipu |

| nan | C | 160112T001600Z | Digital |

| nan | Scala 70 byte source | 160117T013505Z | Rex Kerr |

| nan | Java | 160113T222102Z | Sleafar |

| 028 | C# | 160112T013934Z | Vladimir |

| nan | Python 3 | 160114T085859Z | Aleksi T |

| nan | 160112T202722Z | Joshua | |

| 005 | Plain old C preprocessor 214 bytes input | 160114T075229Z | Thomas P |

| nan | C++ | 160112T175016Z | Toby Spe |

| 320 | ASM | 160112T014044Z | viraptor |

| 236 | Boo | 160112T163438Z | Mason Wh |

| 090 | Kotlin | 160112T183057Z | TheNumbe |

| 214 | C++ | 160112T182600Z | Ben Voig |

| nan | 160112T165048Z | Warbo |

yacc (GNU Bison), 299 bytes

%%

P:a|b|c|d|e|f|g|h|i|j|k|l|m|n|o|p|q|r|s|t|u|v|w|x

a:S a|N b

b:S b|N c

c:S c|N d

d:S d|N e

e:S e|N f

f:S f|N g

g:S g|N h

h:S h|N i

i:S i|N j

j:S j|N k

k:S k|N l

l:S l|N m

m:S m|N n

n:S n|N o

o:S o|N p

p:S p|N q

q:S q|N r

r:S r|N s

s:S s|N t

t:S t|N u

u:S u|N v

v:S v|N w

w:S w|N x

x:N a|S:'0'N:'1'

I haven't managed to run the full version of this compile on my computer because I don't have enough RAM (and the compile would be expected to take hours), but the output size grows exponentially, and going as far as x is definitely enough (going as far as w would be close to the 4GiB target but I don't think it quite reaches it).

I mentioned GNU Bison in the title because it's the yacc implementation I used for testing, but the same program (maybe with a couple more lines) is likely to cause an exponential blowup on other yacc implementations too, as long as they are able to create the program without running past hardcoded limits on the size of the output or something that correlates to that. (It would be possible for a yacc implementation to optimise in a way that prevents an exponential blowup on this sort of input, but don't expect any such yacc implementations to do that in practice.)

Output sizes for smaller versions of this program, with fewer lines, using GNU Bison 3.8.2:

a-n 5167494

a-o 11058187

a-p 23580540

a-q 50106053

a-r 106010181

Explanation

Yacc is a language for describing parsers; you give it a description of the grammar of a language, and yacc generates a parser for it. Yacc's semantics require the input to be parsed left to right.

The grammar in this program can be summarised as "parse a string of 0s and 1s, such that for each suffix of the input that starts with 0, the number of 1s in that suffix is not divisible by 24". However, because yacc parses from left to right, a bit of subtlety is needed to express the situation that the validity of a 0 depends on the elements to its right (rather than its left).

The basic trick is: if the number of 1s in the input modulo 24 were known in advance, it would be possible to determine whether or not a 0 is valid by counting the 1s to the left of the 0, rather than counting the 1s to the right. As such, the grammar is defined by (effectively) creating 24 threads, one for each possible 1-count-modulo-24, and having each of those threads check the input to see if it would be valid assuming that the thread's assumption about the total 1 count is correct. At the end of the input, the total number of 1s is known, so we can just ask the thread that started with a correct assumption whether the input is correct or not.

Why does this lead to an exponential blowup? Almost all yacc implementations compile a program using a virtual machine that works using a stack together with a finite-state machine (known as a "push-down automaton") – typically the virtual machine is interpreted, although at least one implementation instead compiles it (using the processor's call stack as the stack of the virtual machine). However, for this particular program, the stack isn't required and none of the things that yacc implementations use their stack for are present, so the grammar will in practice end up being parsed entirely using the finite state machine. It's certainly possible to do that! You just need 224 states, because each combination of "this thread thinks the input could be valid given its starting assumption" and "that thread thinks the input must be invalid given its starting assumption" needs to be assigned to a state (finite-state machines don't have variables, or indeed anywhere to store data other than their instruction pointer).

This grammar is within the set of grammars that yacc implementations have to be able to handle (according to the specification), so if they target the usual virtual machine (and most don't have any other back-ends), and use the normal algorithm, there will necessarily be an exponential blowup during the compilation process – the program is valid but the virtual machine can't represent it without using exponentially many states.

(As it happens, it is actually possible to implement this program using a push-down automaton without an exponential blowup: you store the entirety of the input on the stack while counting the 1s, then read the input back from the stack and parse it backwards. But an implementation did that would fail to comply with the yacc standard, which disallows reading more than one token beyond the token that causes an error: it's certainly possible to imagine an input that causes all 24 threads to report an error before the end of input is reached, and the "store the input backwards" technique would read too far.)

The program itself is fairly self-explanatory: the first line starting P creates the threads, and then everything else is just a state machine (e.g. the d line says "In state d, either read an S and remain in state d, or read an N and go to state e.") The only subtlety is that in order to golf off a couple of bytes per line, instead of using the '0' and '1' characters directly, I defined them as S and N respectively (those definitions, S:'0'N:'1', are at the end of the program, and don't require whitespace before or between them). The definition of x is x:N a| and reads as "In state x, either read an N and go to state a, or accept the input if at end of input" – if the acceptance condition in state x is not met, then the input will be rejected.

Rust, 29+16=45 bytes, 10,125,006,610 bytes (9.43 GiB) executable

static A:&[u8]=&[0;9e9 as _];

Compile command:

rustc --crate-type lib test.rs

Anything above (1e10) would generate the following error:

error: failed to build archive at `libtest.rlib`: Archive member test.test.d32f52fcc5ed38cf-cgu.0.rcgu.o is too big

error: aborting due to 1 previous error; 1 warning emitted

BrainChild, 4 Gibibytes

reserve 0x3ffffecb;

Try It Online! (Number dialed down for demonstration purposes)

Reserves exactly enough memory to completely fill the 4GB Program Memory that Brainchild offers.

Similarly, the following can be used to unroll to arbitrarily large compiled binaries

macro ('R' (number) (expression)) {if (const $1) {$2; R ($1-1) $2}}

R 0x000000FF int j;

Swift for wasm, 7 byte source, 6326162 byte (6.3MB) wasm file

Not winning, but I'm adding this answer for its sheer absurdity:

print()

The Swift compiler does almost no dead code stripping (at least when compiling for wasm), so you get the entire standard library. The arguments to the print statement, any loops or recursion I added, etc barely seemed to matter. However, removing the print statement entirely shrunk the code size by a noticeable amount.

For reference, I used the wasm file generated by https://swiftwasm.org/, which seems to be using Swift 5.6 at the time of writing.

Rust, 42 bytes, 1.31 GB executable

fn main(){print!("{:?}",[0u8;9999999999])}

Does not require any specific compilation flags, you can just compile with rustc cbomb.rs.

Arrays of the format [x; s] where x is Copy and s has type usize and both have a value known at compile time are constructed during compilation and embedded in the binary, though the final executable is smaller than I would expect from this and I do not know what mechanism does that.

I couldn't make the size much larger than this on my laptop without LLVM or the linker crashing from non-language limitations.

Rust, 42 bytes, 18(?) EB executable (on a 64-bit system)

fn main(){print!("{:?}",[0u8;usize::MAX])}

The array would have a size of 18 EB, but the final executable would probably be smaller. I can't afford this much RAM and storage space, so I haven't been able to test this. Maybe you can if you are in the future.

C, 54 bytes, ridiculously large executable

#include <inttypes.h>

uint64_t main[(uint64_t)~0]={~0};

Not exactly original, but much more devastating.

Inline but not portable version

unsigned long long main[~0ull]={~0};

I am NOT going to sacrifice my laptop just for this.

Explanation

uint64_t, unsigned long long, they're both unsigned 64-bit integers.

The tilde (~) is the bitwise NOT operator in C (it flips the bits of a value).

(uint64_t)0 is 0000000000000000000000000000000000000000000000000000000000000000 in binary.

By applying the bitwise NOT operator, we get a... big number (\$2^{64}-1\$).

Credits

Big thanks to user Digital Trauma for his original implementation. This answer is really just an expansion from that.

Myxal 0.7.1, 46 source bytes + 2 bytes flag = 48 bytes, 2.042 MB compiled

~~~~~~~y¢aaaa¢bbbb¢cccc¢dddd¢eeee¢ffff¢gggg¢hh

Simple node alias recursion bomb. Adding more aliases make the JVM complain of a too large main method.

~~~~~~~y # A bunch of filter modifiers on the element 'y'

¢a # Alias that to 'a'

aaa¢b # 3 'a's = 'b' and so on...

bbb¢c

ccc¢d

ddd¢e

eee¢f

fff¢g

ggg¢h

h # Run that final 'h'

Compiled with the -O flag to disable optimization, which also disables shrinking for some reason.

Julia, 22 bytes (in memory)

0:9^9 .|>i->@eval 2^$i

It's quite easy to make a compilation bomb in Julia, it can easily happen accidentally. Here we use the fact that a^i has some trickery when i is a litteral integer, that allows a^2 to be turned into a*a, and a^-1 into inv(a). It means that there is a new compiled method of litteral_pow being compiled for each i. I'm pretty sure this would be at least 4GB but I don't know how to check it. This is only compiled in memory and not saved in a file though

Julia, 114 bytes (output in a .ji file)

using Pkg

pkg"generate A"

write("A/src/A.jl","module A

!(::Val{x}) where x=x

.!Val.(1:9^9)end")

pkg"dev A"

using A

(can't) Try it online!

Try A online!

To save compiled code to a file, the function must be in a package, therefore we create a package A that has the culprit (the function !). Val(i) is of type Val{i}, so a new method is compiled for each i. The output file will be in ~/.julia/compiled/<version>/A/xxx.ji

In my testing, each additional method adds at least 150 bytes (and growing, 182MB for 1M methods), which means 25M would be enough

in Julia 1.7, +2 bytes because pkg"dev ./A" is needed

Julia, 117 bytes (without writing files)

this is basically the same as the above, but with the files already there. This is slightly longer because of the uuid needed in Project.toml (this is taken care of in pkg"generate A")

file structure

A

├── src

│ └── A.jl

├── Project.toml

└── a.jl

Project.toml, 52 bytes

name="A"

uuid="8945f399-ba5e-44d3-9e17-ab2f7e467331"

src/A.jl, 47 bytes

module A

!(::Val{x}) where x=x

.!Val.(0:9^9)end

a.jl, 7 bytes

using A

command line options (in the A folder), +11 bytes

julia --project=. a.jl

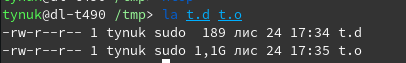

Dlang (ldc compiler)

command to build ldc -c t.d

GBS is count of gigabytes;

code of t.d:

import std;

enum GBS = 1;

void main(){

static foreach(i; 0..2* GBS){

mixin(text(q{static immutable a}, i,q{ = uint.max.BigInt << uint.max;}));

mixin(text(q{a}, i, q{.writeln;}));

}

}

PHP 7.1:

const X="x",Y=X.X.X.X.X.X.X.X,Z=Y.Y.Y.Y.Y.Y.Y.Y,A=Z.Z.Z.Z.Z.Z.Z.Z,B=A.A.A.A.A.A.A.A,C=B.B.B.B.B.B.B.B,D=C.C.C.C.C.C.C.C,E=D.D.D.D.D.D.D.D,F=E.E.E.E.E.E.E.E,G=F.F.F.F.F.F.F.F;

. is the concatenation operator, and PHP's compiler will try to do constant folding where possible, so it will construct a huge string and store it in PHP's internal bytecode. (I think you can get this written to a file with newer versions.) This is only as efficient as a classic XML entity bomb unfortunately. You'd need a few more repetitions to get to the gigabyte range.

The interesting part is that by default the worst that'll happen is seeing an error like:

Fatal error: Allowed memory size of 134217728 bytes exhausted (tried to allocate 50331680 bytes) in Command line code on line 1

PHP's web-orientedness means it has a memory limit by default!

Boo, way more than you can expect from this

macro R(e as int):for i in range(9**e):yield R.Body

x = 0

R 99:++x

C, 284 bytes + 2 for the -c in gcc bomb.c -o bomb.o -c; output: 2 147 484 052 bytes

#define a 1,1,1,1,1,1,1,1,1,1,1,1,1,1,1,1

#define b a,a,a,a,a,a,a,a,a,a,a,a,a,a,a,a

#define c b,b,b,b,b,b,b,b,b,b,b,b,b,b,b,b

#define d c,c,c,c,c,c,c,c,c,c,c,c,c,c,c,c

#define e d,d,d,d,d,d,d,d,d,d,d,d,d,d,d,d

#define f e,e,e,e,e,e,e,e,e,e,e,e,e,e,e,e

__int128 x[]={f,f,f,f,f,f,f,f};

C, 26 byte source, 2,139,103,367 byte output, valid program

const main[255<<21]={195};

Compiled using: gcc cbomb.c -o cbomb (gcc version 4.6.3, Ubuntu 12.04, ~77 seconds)

I thought I'd try to see how large I could make a valid program without using any command line options. I got the idea from this answer: https://codegolf.stackexchange.com/a/69193/44946 by Digital Trauma. See the comments there as to why this compiles.

How it works: The const removes the write flag from the pages in the segment, so main can be executed. The 195 is the Intel machine code for a return. And since the Intel architecture is little-endian, this is the first byte. The program will exit with whatever the start up code put in the eax register, likely 0.

It's only about 2 gig because the linker is using 32 bit signed values for offsets. It's 8 meg smaller than 2 gig because the compiler/linker needs some space to work and this is the largest I could get it without linker errors - ymmv.

C, (14 + 15) = 29 byte source, 17,179,875,837 (16 GB) byte executable

Thanks to @viraptor for 6 bytes off.

Thanks to @hvd for 2 bytes off and executable size x4.

This defines the main function as a large array and initialises its first element. This causes GCC to store the entire array in the resulting executable.

Because this array is bigger than 2GB, we need to provide the -mcmodel=medium flag to GCC. The extra 15 bytes are included in the score, as per the rules.

main[-1u]={1};

Don't expect this code to do anything nice when run.

Compile with:

gcc -mcmodel=medium cbomb.c -o cbomb

It took me a while to get round to testing @hvd's suggestion - and to find a machine with enough juice to handle it. Eventually I found a old non-production RedHat 5.6 VM with 10GB RAM, 12GB swap, and /tmp set to a large local partition. GCC version is 4.1.2. Total compile time about 27 minutes.

Due to the CPU and RAM load, I recommend against doing this compile on any remotely production-related machine.

Scala - 70 byte source, 22980842 byte result (after jar)

import scala.{specialized => s}

class X[@s A, @s B, @s C, @s D, @s E]

This produces 95 (about 59,000) specialized class files, which pack into a jar of about 23 MB. You can in principle keep going if you have a filesystem that can handle that many files and enough memory.

(If the jar command must be included, it's 82 bytes.)

Java, 450 + 22 = 472 bytes source, ~1GB class file

B.java (golfed version, warning during compilation)

import javax.annotation.processing.*;@SupportedAnnotationTypes("java.lang.Override")public class B extends AbstractProcessor{@Override public boolean process(java.util.Set a,RoundEnvironment r){if(a.size()>0){try(java.io.Writer w=processingEnv.getFiler().createSourceFile("C").openWriter()){w.write("class C{int ");for(int i=0;i<16380;++i){for(int j=0;j<65500;++j){w.write("i");}w.write(i+";int ");}w.write("i;}");}catch(Exception e){}}return true;}}

B.java (ungolfed version)

import java.io.Writer;

import java.util.Set;

import javax.annotation.processing.AbstractProcessor;

import javax.annotation.processing.RoundEnvironment;

import javax.annotation.processing.SupportedAnnotationTypes;

import javax.annotation.processing.SupportedSourceVersion;

import javax.lang.model.SourceVersion;

import javax.lang.model.element.TypeElement;

@SupportedAnnotationTypes("java.lang.Override")

@SupportedSourceVersion(SourceVersion.RELEASE_8)

public class B extends AbstractProcessor {

@Override

public boolean process(Set<? extends TypeElement> annotations, RoundEnvironment roundEnv) {

if (annotations.size() > 0) {

try (Writer writer = processingEnv.getFiler().createSourceFile("C").openWriter()) {

writer.write("class C{int ");

for (int i = 0; i < 16380; ++i) {

for (int j = 0; j < 65500; ++j) {

writer.write("i");

}

writer.write(i + ";int ");

}

writer.write("i;}");

} catch (Exception e) {

}

}

return true;

}

}

Compilation

javac B.java

javac -J-Xmx16G -processor B B.java

Explanation

This bomb uses Annotation Processors. It needs 2 compile passes. The first pass builds the processor class B. During the second pass the processor creates a new source file C.java, and compiles it to a C.class with a size of 1,073,141,162 bytes.

There are several limitations when trying to create a big class file:

- Creating identifiers longer than about 64k results in:

error: UTF8 representation for string "iiiiiiiiiiiiiiiiiiii..." is too long for the constant pool. - Creating more than about 64k variables/functions results in:

error: too many constants - There is also a limit of about 64k for the code size of a function.

- There seems to be a general limit (bug?) in the java compiler of about 1GB for the

.classfile. If I increase16380to16390in the above code the compiler never returns. - There is also a limit of about 1GB for the

.javafile. Increasing16380to16400in the above code results in:An exception has occurred in the compiler (1.8.0_66). Please file a bug ...followed by ajava.lang.IllegalArgumentException.

C#, about 1 min to compile, 28MB output binary:

class X<A,B,C,D,E>{class Y:X<Y,Y,Y,Y,Y>{Y.Y.Y.Y.Y.Y.Y.Y.Y y;}}

Adding more Y's will increase the size exponentially.

An explanation by Pharap as per @Odomontois' request:

This answer is abusing inheritance and type parameters to create recursion. To understand what's happening, it's easier to first simplify the problem. Consider class X<A> { class Y : X<Y> { Y y; } }, which generates the generic class X<A>, which has an inner class Y. X<A>.Y inherits X<Y>, hence X<A>.Y also has an inner class Y, which is then X<A>.Y.Y. This then also has an inner class Y, and that inner class Y has an inner class Y etc. This means that you can use scope resolution (.) ad infinitum, and every time you use it, the compiler has to deduce another level of inheritance and type parameterisation.

By adding additional type parameters, the work the compiler has to do at each stage is further increased.

Consider the following cases:

In class X<A> { class Y : X<Y> { Y y;} } type param A has a type of X<A>.Y.

In class X<A> { class Y : X<Y> { Y.Y y;} } type param A has a type of X<X<A>.Y>.Y.

In class X<A> { class Y : X<Y> { Y.Y.Y y;} } type param A has a type of X<X<X<A>.Y>.Y>.Y.

In class X<A,B> { class Y : X<Y,Y> { Y y;} } type param A is X<A,B>.Y and B is X<A,B>.Y.

In class X<A> { class Y : X<Y> { Y.Y y;} } type param A is X<X<A,B>.Y, X<A,B>.Y>.Y and B is X<X<A,B>.Y, X<A,B>.Y>.Y.

In class X<A> { class Y : X<Y> { Y.Y.Y y;} } type param A is X<X<X<A,B>.Y, X<A,B>.Y>.Y, X<X<A,B>.Y, X<A,B>.Y>.Y>.Y and B is X<X<X<A,B>.Y, X<A,B>.Y>.Y, X<X<A,B>.Y, X<A,B>.Y>.Y>.Y.

Following this pattern, one can only imagine1 the work the compiler would have to do to to deduce what A to E are in Y.Y.Y.Y.Y.Y.Y.Y.Y in the definition class X<A,B,C,D,E>{class Y:X<Y,Y,Y,Y,Y>{Y.Y.Y.Y.Y.Y.Y.Y.Y y;}}.

1 You could figure it out, but you'd need a lot of patience, and intellisense won't help you out here.

Python 3, 13 byte source, 9,057,900,463 byte (8.5GiB) .pyc-file

(1<<19**8,)*2

Edit: Changed the code to the version above after I realized the rules say output size beyond 4GiB doesn't matter, and the code for this one is ever so slightly shorter; The previous code - and more importantly the explanation - can be found below.

Python 3, 16 byte source, >32TB .pyc-file (if you have enough memory, disk space and patience)

(1<<19**8,)*4**7

Explanation: Python 3 does constant folding, and you get big numbers fast with exponentation. The format used by .pyc files stores the length of the integer representation using 4 bytes, though, and in reality the limit seems to be more like 2**31, so using just exponentation to generate one big number, the limit seems to be generating a 2GB .pyc file from an 8 byte source. (19**8 is a bit shy of 8*2**31, so 1<<19**8 has a binary representation just under 2GB; the multiplication by eight is because we want bytes, not bits)

However, tuples are also immutable and multiplying a tuple is also constant folded, so we can duplicate that 2GB blob as many times as we want, up to at least 2**31 times, probably. The 4**7 to get to 32TB was chosen just because it was the first exponent I could find that beat the previous 16TB answer.

Unfortunately, with the memory I have on my own computer, I could test this only up to a multiplier of 2, ie. (1<<19**8,)*2, which generated a 8.5GB file, which I hope demonstrates that the answer is realistic (ie. the file size isn't limited to 2**32=4GB).

Also, I have no idea why the file size I got when testing was 8.5GB instead of the 4GB-ish I expected, and the file is big enough that I don't feel like poking around it at the moment.

Here's my C answer from 2005. Would produce a 16TB binary if you had 16TB RAM (you don't).

struct indblock{

uint32_t blocks[4096];

};

struct dindblock {

struct indblock blocks[4096];

};

struct tindblock {

struct dindblock blocks[4096];

};

struct inode {

char data[52]; /* not bothering to retype the details */

struct indblock ind;

struct dindblock dint;

struct tindblock tind;

};

struct inode bbtinode;

int main(){}

Plain old C preprocessor: 214 bytes input, 5MB output

Inspired by my real-world preprocessor fail here.

#define A B+B+B+B+B+B+B+B+B+B

#define B C+C+C+C+C+C+C+C+C+C

#define C D+D+D+D+D+D+D+D+D+D

#define D E+E+E+E+E+E+E+E+E+E

#define E F+F+F+F+F+F+F+F+F+F

#define F x+x+x+x+x+x+x+x+x+x

int main(void) { int x, y = A; }

Experiments show that each level of #defines will (as expected) make the output approximately ten times larger. But since this example took more than an hour to compile, I never went on to "G".

C++, 250 + 26 = 276 bytes

template<int A,int B>struct a{static const int n;};

template<int A,int B>const int a<A,B>::n=a<A-1,a<A,B-1>::n>::n;

template<int A>struct a<A,0>{static const int n=a<A-1,1>::n;};

template<int B>struct a<0,B>{static const int n=B+1;};

int h=a<4,2>::n;

This is the Ackermann function implemented in templates. I'm not able to compile with h=a<4,2>::n; on my little (6GB) machine, but I did manage h=a<3,14> for a 26M output file. You can tune the constants to hit your platform's limits - see the linked Wikipedia article for guidance.

Requires -g flag to GCC (because it's all the debug symbols that actually consume any space), and a larger-than-default template depth. My compile line ended up as

g++ -ftemplate-depth=999999 -g -c -o 69189.o 69189.cpp

Platform information

g++ (Ubuntu 4.8.2-19ubuntu1) 4.8.2

Linux 3.13.0-46-generic #79-Ubuntu SMP x86_64 GNU/Linux

ASM, 61 bytes (29 bytes source, 32 bytes for flags), 4,294,975,320 bytes executable

.globl main

main:

.zero 1<<32

Compile with gcc the_file.s -mcmodel=large -Wl,-fuse-ld=gold

Boo, 71 bytes. Compile time: 9 minutes. 134,222,236 byte executable

macro R(e as int):

for i in range(2**e):yield R.Body

x = 0

R 25:++x

Uses a macro R (for Repeat) to cause the compiler to multiply the increment statement an arbitrary number of times. No special compiler flags are needed; simply save the file as bomb.boo and invoke the compiler with booc bomb.boo to build it.

Kotlin, 90 bytes source, 177416 bytes (173 KB) compiled JVM binary

inline fun a(x:(Int)->Any){x(0);x(1)}

fun b()=a{a{a{a{a{a{a{a{a{a{a{println(it)}}}}}}}}}}}

Technically, you could make this even longer by nesting the expression further. However, the compiler crashes with a StackOverflow error if you increase the recursion.

C++, 214 bytes (no special compile options needed)

#define Z struct X

#define T template<int N

T,int M=N>Z;struct Y{static int f(){return 0;}};T>Z<N,0>:Y{};T>Z<0,N>:Y{};T,int M>Z{static int f(){static int x[99999]={X<N-1,M>::f()+X<N,M-1>::f()};}};int x=X<80>::f();

It's a fairly straightforward two-dimensional template recursion (recursion depth goes as the square-root of total templates emitted, so won't exceed platform limits), with a small amount of static data in each one.

Generated object file with g++ 4.9.3 x86_64-pc-cygwin is 2567355421 bytes (2.4GiB).

Increasing the initial value above 80 breaks the cygwin gcc assembler (too many segments).

Also, 99999 can be replaced by 9<<19 or similar for increased size without changing the source code... but I don't think I need to use any more disk space than I already am ;)

If an output of more than 4GB is achieved (perhaps if somebody finds a turing complete preprocessor), the competition will be for the smallest source which produces a file of at least that size (it's just not practical to test submissions which get too big).

"Template Haskell" allows Haskell code to be generated at compile-time using Haskell, and is hence a turing complete pre-processor.

Here's my attempt, parameterised by an arbitrary numerical expression FOO:

import Language.Haskell.TH;main=print $(ListE .replicate FOO<$>[|0|])

The magic is the code inside the "splice" $(...). This will be executed at compile time, to generate a Haskell AST, which is grafted on to the program's AST in place of the splice.

In this case, we make a simple AST representing the literal 0, we replicate this FOO times to make a list, then we use ListE from the Language.Haskell.TH module to turn this list of ASTs into one big AST, representing the literal [0, 0, 0, 0, 0, ...].

The resulting program is equivalent to main = print [0, 0, 0, ...] with FOO repetitions of 0.

To compile to ELF:

$ ghc -XTemplateHaskell big.hs

[1 of 1] Compiling Main ( big.hs, big.o )

Linking big ...

$ file big

big: ELF 32-bit LSB executable, Intel 80386, version 1 (SYSV), dynamically linked, interpreter /nix/store/mibabdfiaznqaxqiy4bqhj3m9gaj45km-glibc-2.21/lib/ld-linux.so.2, for GNU/Linux 2.6.32, not stripped

This weighs in at 83 bytes (66 for the Haskell code and 17 for the -XTemplateHaskell argument), plus the length of FOO.

We can avoid the compiler argument and just compile with ghc, but we have to put {-# LANGUAGE TemplateHaskell#-} at the beginning, which bumps the code up to 97 bytes.

Here are a few example expressions for FOO, and the size of the resulting binary:

FOO FOO size Total size Binary size

-------------------------------------------------

(2^10) 6B 89B 1.1MB

(2^15) 6B 89B 3.6MB

(2^17) 6B 89B 12MB

(2^18) 6B 89B 23MB

(2^19) 6B 89B 44MB

I ran out of RAM compiling with (2^20).

We can also make an infinite list, using repeat instead of replicate FOO, but that prevents the compiler from halting ;)